Btrfs is a next generation copy-on-write filesystem that supports many advanced storage technologies that make it a good fit for Docker. Btrfs is included in the mainline Linux kernel.

Docker’s btrfs storage driver leverages many Btrfs features for image and container management. Among these features are block-level operations, thin provisioning, copy-on-write snapshots, and ease of administration. You can easily combine multiple physical block devices into a single Btrfs filesystem.

This article refers to Docker’s Btrfs storage driver as btrfs and the overall Btrfs filesystem as Btrfs.

Note: The btrfs storage driver is only supported on Docker Engine - Community on Ubuntu or Debian.

Prerequisites

btrfs is supported if you meet the following prerequisites:

- Docker Engine - Community: For Docker Engine - Community, btrfs is only recommended on Ubuntu or Debian.

- Changing the storage driver makes any containers you have already created inaccessible on the local system. Use docker save to save images, and push existing images to Docker Hub or a private repository, so that you do not need to re-create them later.

- btrfs requires a dedicated block storage device such as a physical disk. This block device must be formatted for Btrfs and mounted into /var/lib/docker/. The configuration instructions below walk you through this procedure. By default, the SLES root (/) filesystem is formatted with Btrfs, so for SLES, you do not need to use a separate block device, but you can choose to do so for performance reasons.

- btrfs support must exist in your kernel. To check this, look for btrfs in /proc/filesystems, for example by running grep btrfs /proc/filesystems.

- To manage Btrfs filesystems at the level of the operating system, you need the btrfs command. If you do not have this command, install the btrfsprogs package (SLES) or btrfs-tools package (Ubuntu).
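The kernel-support prerequisite can also be checked from a script. The following is a minimal sketch, assuming a Linux host where /proc/filesystems lists one filesystem per line with the name in the last column. The function names are hypothetical and are not part of Docker or btrfs-progs. Note that a filesystem built as a module may only appear in the list once the module is loaded.

```python
from pathlib import Path


def supports_filesystem(proc_filesystems_text: str, name: str = "btrfs") -> bool:
    """Return True if `name` appears in /proc/filesystems-style text."""
    for line in proc_filesystems_text.splitlines():
        fields = line.split()
        # Lines look like "nodev\tsysfs" or "\tbtrfs"; the name is the last field.
        if fields and fields[-1] == name:
            return True
    return False


def kernel_supports_btrfs(path: str = "/proc/filesystems") -> bool:
    """Read the kernel's filesystem list (Linux only) and look for btrfs."""
    return supports_filesystem(Path(path).read_text())


# Illustrative sample text, not captured from a real host.
sample = "nodev\tsysfs\nnodev\tproc\n\text4\n\tbtrfs\n"
print("btrfs listed in sample:", supports_filesystem(sample))
```

On a real host, calling kernel_supports_btrfs() reads the live list instead of the sample text.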

Configure Docker to use the btrfs storage driver

This procedure is essentially identical on SLES and Ubuntu.

- Stop Docker.

- Copy the contents of /var/lib/docker/ to a backup location (this procedure uses /var/lib/docker.bk), then empty the contents of /var/lib/docker/.

- Format your dedicated block device or devices as a Btrfs filesystem. This example assumes that you are using two block devices called /dev/xvdf and /dev/xvdg. Double-check the block device names because this is a destructive operation. There are many more options for Btrfs, including striping and RAID. See the Btrfs documentation.

- Mount the new Btrfs filesystem on the /var/lib/docker/ mount point. You can specify any of the block devices used to create the Btrfs filesystem. Don’t forget to make the change permanent across reboots by adding an entry to /etc/fstab.

- Copy the contents of /var/lib/docker.bk to /var/lib/docker/.

- Configure Docker to use the btrfs storage driver. This is required even though /var/lib/docker/ is now using a Btrfs filesystem. Edit or create the file /etc/docker/daemon.json and set the storage-driver key to btrfs. If it is a new file, that key and value are its only contents. If it is an existing file, add the key and value only, being careful to end the line with a comma if it is not the final line before an ending curly bracket (}). See all storage options for each storage driver in the daemon reference documentation.

- Start Docker. After it is running, verify that btrfs is being used as the storage driver.

- When you are ready, remove the /var/lib/docker.bk directory.
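The daemon.json edit in the procedure above is easy to get wrong by hand, because a missing comma leaves the file as invalid JSON. The following sketch shows one way to add the storage-driver key while keeping any existing keys. The helper name set_storage_driver is hypothetical, and the sketch only builds the new file text; writing it to /etc/docker/daemon.json is left to the reader.

```python
import json


def set_storage_driver(existing_json: str, driver: str) -> str:
    """Return daemon.json text with "storage-driver" set, keeping other keys."""
    # An empty or missing file is treated as an empty JSON object.
    # json.loads raises an error if the existing text is not well-formed JSON.
    config = json.loads(existing_json) if existing_json.strip() else {}
    config["storage-driver"] = driver
    return json.dumps(config, indent=2) + "\n"


# New file: the result contains only the storage driver setting.
print(set_storage_driver("", "btrfs"))

# Existing file: other keys are preserved (the "debug" key is just an example).
print(set_storage_driver('{"debug": true}', "btrfs"))
```

Because the file is parsed and re-serialized, the trailing-comma problem described above cannot occur.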

Manage a Btrfs volume

One of the benefits of Btrfs is the ease of managing Btrfs filesystems without the need to unmount the filesystem or restart Docker.

When space gets low, Btrfs automatically expands the volume in chunks of roughly 1 GB.

To add a block device to a Btrfs volume, use the btrfs device add and btrfs filesystem balance commands.

Note: While you can do these operations with Docker running, performance suffers. It might be best to plan an outage window to balance the Btrfs filesystem.

How the btrfs storage driver works

The btrfs storage driver works differently from devicemapper or other storage drivers in that your entire /var/lib/docker/ directory is stored on a Btrfs volume.

Image and container layers on-disk

Information about image layers and writable container layers is stored in /var/lib/docker/btrfs/subvolumes/. This subdirectory contains one directory per image or container layer, with the unified filesystem built from a layer plus all its parent layers. Subvolumes are natively copy-on-write and have space allocated to them on-demand from an underlying storage pool. They can also be nested and snapshotted. The diagram below shows 4 subvolumes. ‘Subvolume 2’ and ‘Subvolume 3’ are nested, whereas ‘Subvolume 4’ shows its own internal directory tree.

Only the base layer of an image is stored as a true subvolume. All the other layers are stored as snapshots, which only contain the differences introduced in that layer. You can create snapshots of snapshots as shown in the diagram below.

On disk, snapshots look and feel just like subvolumes, but in reality they are much smaller and more space-efficient. Copy-on-write is used to maximize storage efficiency and minimize layer size, and writes in the container’s writable layer are managed at the block level. The following image shows a subvolume and its snapshot sharing data.

For maximum efficiency, when a container needs more space, it is allocated in chunks of roughly 1 GB in size.

Docker’s btrfs storage driver stores every image layer and container in its own Btrfs subvolume or snapshot. The base layer of an image is stored as a subvolume whereas child image layers and containers are stored as snapshots. This is shown in the diagram below.

The high level process for creating images and containers on Docker hosts running the btrfs driver is as follows:

- The image’s base layer is stored in a Btrfs subvolume under /var/lib/docker/btrfs/subvolumes.

- Subsequent image layers are stored as a Btrfs snapshot of the parent layer’s subvolume or snapshot, but with the changes introduced by this layer. These differences are stored at the block level.

- The container’s writable layer is a Btrfs snapshot of the final image layer, with the differences introduced by the running container. These differences are stored at the block level.

How container reads and writes work with btrfs

Reading files

A container is a space-efficient snapshot of an image. Metadata in the snapshot points to the actual data blocks in the storage pool. This is the same as with a subvolume. Therefore, reads performed against a snapshot are essentially the same as reads performed against a subvolume.

Writing files

- Writing new files: Writing a new file to a container invokes an allocate-on-demand operation to allocate new data blocks to the container’s snapshot. The file is then written to this new space. The allocate-on-demand operation is native to all writes with Btrfs and is the same as writing new data to a subvolume. As a result, writing new files to a container’s snapshot operates at native Btrfs speeds.

- Modifying existing files: Updating an existing file in a container is a copy-on-write operation (redirect-on-write is the Btrfs terminology). The original data is read from the layer where the file currently exists, and only the modified blocks are written into the container’s writable layer. Next, the Btrfs driver updates the filesystem metadata in the snapshot to point to this new data. This behavior incurs very little overhead.

- Deleting files or directories: If a container deletes a file or directory that exists in a lower layer, Btrfs masks the existence of the file or directory in the lower layer. If a container creates a file and then deletes it, this operation is performed in the Btrfs filesystem itself and the space is reclaimed.

With Btrfs, writing and updating lots of small files can result in slow performance.
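The read, modify, and delete behavior described above can be illustrated with a toy model. This is only a sketch of the copy-on-write idea, assuming files are lists of fixed blocks held in memory. The Volume class and its methods are hypothetical and do not reflect how Btrfs is actually implemented.

```python
class Volume:
    """Toy copy-on-write volume: maps file names to lists of data blocks."""

    def __init__(self, files=None):
        # Copy only the name -> block-list map, not the blocks themselves.
        self.files = dict(files or {})

    def snapshot(self):
        # A snapshot starts out sharing every block list with its parent.
        return Volume(self.files)

    def read(self, name):
        # Reads follow the snapshot's metadata to the shared or private blocks.
        return b"".join(self.files[name])

    def write_block(self, name, index, data):
        # Redirect-on-write: build a new block list that reuses every
        # unmodified block and points at new data only for the changed one.
        blocks = list(self.files.get(name, []))
        while len(blocks) <= index:
            blocks.append(b"")
        blocks[index] = data
        self.files[name] = blocks

    def delete(self, name):
        # Only this volume's metadata changes; the parent layer is untouched.
        self.files.pop(name, None)


image = Volume({"app.conf": [b"AAAA", b"BBBB"]})
container = image.snapshot()
container.write_block("app.conf", 1, b"CCCC")

print(container.read("app.conf"))  # the container sees its modified block
print(image.read("app.conf"))      # the image layer is unchanged
```

In this model the first block of app.conf is the same object in both volumes after the write, which mirrors how a snapshot and its parent share unmodified data.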

Btrfs and Docker performance

There are several factors that influence Docker’s performance under the btrfs storage driver.

Note: Many of these factors are mitigated by using Docker volumes for write-heavy workloads, rather than relying on storing data in the container’s writable layer. However, in the case of Btrfs, Docker volumes still suffer from these drawbacks unless /var/lib/docker/volumes/ is backed by a filesystem other than Btrfs.

- Page caching. Btrfs does not support page cache sharing. This means that each process accessing the same file copies the file into the Docker host’s memory. As a result, the btrfs driver may not be the best choice for high-density use cases such as PaaS.

- Small writes. Containers performing lots of small writes (this usage pattern also matches what happens when you start and stop many containers in a short period of time) can lead to poor use of Btrfs chunks. This can prematurely fill the Btrfs filesystem and lead to out-of-space conditions on your Docker host. Use btrfs filesystem show to closely monitor the amount of free space on your Btrfs device.

- Sequential writes. Btrfs uses a journaling technique when writing to disk. This can impact the performance of sequential writes, reducing performance by up to 50%.

- Fragmentation. Fragmentation is a natural byproduct of copy-on-write filesystems like Btrfs. Many small random writes can compound this issue. Fragmentation can manifest as CPU spikes when using SSDs or head thrashing when using spinning disks. Either of these issues can harm performance. If your Linux kernel version is 3.9 or higher, you can enable the autodefrag feature when mounting a Btrfs volume. Test this feature on your own workloads before deploying it into production, as some tests have shown a negative impact on performance.

- SSD performance: Btrfs includes native optimizations for SSD media. To enable these features, mount the Btrfs filesystem with the -o ssd mount option. These optimizations improve SSD write performance by skipping work, such as seek optimizations, that does not apply to solid-state media.

- Balance Btrfs filesystems often: Use operating system utilities such as a cron job to balance the Btrfs filesystem regularly, during non-peak hours. This reclaims unallocated blocks and helps to prevent the filesystem from filling up unnecessarily. You cannot rebalance a totally full Btrfs filesystem unless you add additional physical block devices to the filesystem. See the BTRFS Wiki.

- Use fast storage: Solid-state drives (SSDs) provide faster reads and writes than spinning disks.

- Use volumes for write-heavy workloads: Volumes provide the best and most predictable performance for write-heavy workloads. This is because they bypass the storage driver and do not incur any of the potential overheads introduced by thin provisioning and copy-on-write. Volumes have other benefits, such as allowing you to share data among containers and persisting even when no running container is using them.

Device Mapper is a kernel-based framework that underpins many advanced volume management technologies on Linux. Docker’s devicemapper storage driver leverages the thin provisioning and snapshotting capabilities of this framework for image and container management. This article refers to the Device Mapper storage driver as devicemapper, and the kernel framework as Device Mapper.

For the systems where it is supported, devicemapper support is included in the Linux kernel. However, specific configuration is required to use it with Docker.

The devicemapper driver uses block devices dedicated to Docker and operates at the block level, rather than the file level. These devices can be extended by adding physical storage to your Docker host, and they perform better than using a filesystem at the operating system (OS) level.

Prerequisites

- The devicemapper storage driver is a supported storage driver for Docker EE on many OS distributions. See the Product compatibility matrix for details.

- devicemapper is also supported on Docker Engine - Community running on CentOS, Fedora, Ubuntu, or Debian.

- Changing the storage driver makes any containers you have already created inaccessible on the local system. Use docker save to save images, and push existing images to Docker Hub or a private repository, so you do not need to recreate them later.

Configure Docker with the devicemapper storage driver

Before following these procedures, you must first meet all the prerequisites.

Configure loop-lvm mode for testing

This configuration is only appropriate for testing. The loop-lvm mode makes use of a ‘loopback’ mechanism that allows files on the local disk to be read from and written to as if they were an actual physical disk or block device. However, the addition of the loopback mechanism, and interaction with the OS filesystem layer, means that IO operations can be slow and resource-intensive. Use of loopback devices can also introduce race conditions. However, setting up loop-lvm mode can help identify basic issues (such as missing user space packages, kernel drivers, etc.) ahead of attempting the more complex set up required to enable direct-lvm mode. loop-lvm mode should therefore only be used to perform rudimentary testing prior to configuring direct-lvm.

For production systems, see Configure direct-lvm mode for production.

- Stop Docker.

- Edit /etc/docker/daemon.json. If it does not yet exist, create it. Assuming that the file was empty, set the storage-driver key to devicemapper as its only contents. See all storage options for each storage driver in the daemon reference documentation. Docker does not start if the daemon.json file contains badly-formed JSON.

- Start Docker.

- Verify that the daemon is using the devicemapper storage driver. Use the docker info command and look for Storage Driver.
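The verification step can be scripted by scanning the text that docker info prints. The sketch below assumes the output consists of "Key: value" lines and that a loopback-backed pool reports a non-empty Data loop file entry. The function names and the sample text are illustrative, and the exact fields vary between Docker versions.

```python
def parse_docker_info(text: str) -> dict:
    """Parse "Key: value" lines from `docker info` text into a dict."""
    info = {}
    for line in text.splitlines():
        # Split on the first colon only, so values may contain colons.
        key, sep, value = line.strip().partition(":")
        if sep:
            info[key.strip()] = value.strip()
    return info


def devicemapper_mode(info: dict) -> str:
    """Classify the host as loop-lvm, direct-lvm, or not devicemapper."""
    if info.get("Storage Driver") != "devicemapper":
        return "not devicemapper"
    # Assumption: loopback-backed pools report a "Data loop file" path.
    if info.get("Data loop file"):
        return "loop-lvm"
    return "direct-lvm"


# Illustrative sample, not captured from a real host.
sample = """\
Storage Driver: devicemapper
 Pool Name: docker-202:1-8413957-pool
 Data file: /dev/loop0
 Data loop file: /var/lib/docker/devicemapper/data
 Metadata loop file: /var/lib/docker/devicemapper/metadata
"""
print(devicemapper_mode(parse_docker_info(sample)))
```

On a real host, the text could be captured by running docker info and passing its standard output to parse_docker_info.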

If the output shows a Data loop file and a Metadata loop file under /var/lib/docker/devicemapper, the host is running in loop-lvm mode, which is not supported on production systems. These are loopback-mounted sparse files. For production systems, see Configure direct-lvm mode for production.

Configure direct-lvm mode for production

Production hosts using the devicemapper storage driver must use direct-lvm mode. This mode uses block devices to create the thin pool. This is faster than using loopback devices, uses system resources more efficiently, and block devices can grow as needed. However, more setup is required than in loop-lvm mode.

After you have satisfied the prerequisites, follow the steps below to configure Docker to use the devicemapper storage driver in direct-lvm mode.

Warning: Changing the storage driver makes any containers you have already created inaccessible on the local system. Use docker save to save images, and push existing images to Docker Hub or a private repository, so you do not need to recreate them later.

Allow Docker to configure direct-lvm mode

With Docker 17.06 and higher, Docker can manage the block device for you, simplifying configuration of direct-lvm mode. This is appropriate for fresh Docker setups only. You can only use a single block device. If you need to use multiple block devices, configure direct-lvm mode manually instead. The following new configuration options have been added:

| Option | Description | Required? | Default | Example |
|---|---|---|---|---|
| dm.directlvm_device | The path to the block device to configure for direct-lvm. | Yes | | dm.directlvm_device='/dev/xvdf' |
| dm.thinp_percent | The percentage of space to use for storage from the passed-in block device. | No | 95 | dm.thinp_percent=95 |
| dm.thinp_metapercent | The percentage of space to use for metadata storage from the passed-in block device. | No | 1 | dm.thinp_metapercent=1 |
| dm.thinp_autoextend_threshold | The threshold for when lvm should automatically extend the thin pool as a percentage of the total storage space. | No | 80 | dm.thinp_autoextend_threshold=80 |
| dm.thinp_autoextend_percent | The percentage to increase the thin pool by when an autoextend is triggered. | No | 20 | dm.thinp_autoextend_percent=20 |
| dm.directlvm_device_force | Whether to format the block device even if a filesystem already exists on it. If set to false and a filesystem is present, an error is logged and the filesystem is left intact. | No | false | dm.directlvm_device_force=true |

Edit the daemon.json file and set the appropriate options from the table above. See all storage options for each storage driver in the daemon reference documentation.

Restart Docker for the changes to take effect. Docker invokes the commands to configure the block device for you.
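As an illustration of how the options in the table fit together, the sketch below builds daemon.json text in which each option is passed as a key=value string in the storage-opts list. The helper name direct_lvm_daemon_json is hypothetical, the defaults are copied from the table, and /dev/xvdf is the example device used in this article.

```python
import json


def direct_lvm_daemon_json(device: str, overrides: dict = None) -> str:
    """Build daemon.json text that lets Docker configure direct-lvm itself."""
    options = {
        "dm.directlvm_device": device,
        "dm.thinp_percent": 95,
        "dm.thinp_metapercent": 1,
        "dm.thinp_autoextend_threshold": 80,
        "dm.thinp_autoextend_percent": 20,
        # Kept as a string so it serializes as "false", not Python's "False".
        "dm.directlvm_device_force": "false",
    }
    options.update(overrides or {})
    config = {
        "storage-driver": "devicemapper",
        "storage-opts": [f"{key}={value}" for key, value in options.items()],
    }
    return json.dumps(config, indent=2)


print(direct_lvm_daemon_json("/dev/xvdf"))
```

Only dm.directlvm_device is required, so a shorter configuration that omits the defaulted options should behave the same way.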

Warning: Changing these values after Docker has prepared the block device for you is not supported and causes an error.

You still need to perform periodic maintenance tasks.

Configure direct-lvm mode manually

The procedure below creates a logical volume configured as a thin pool to use as backing for the storage pool. It assumes that you have a spare block device at /dev/xvdf with enough free space to complete the task. The device identifier and volume sizes may be different in your environment and you should substitute your own values throughout the procedure. The procedure also assumes that the Docker daemon is in the stopped state.

- Identify the block device you want to use. The device is located under /dev/ (such as /dev/xvdf) and needs enough free space to store the images and container layers for the workloads that host runs. A solid state drive is ideal.

- Stop Docker.

- Install the following packages:

  - RHEL / CentOS: device-mapper-persistent-data, lvm2, and all dependencies

  - Ubuntu / Debian: thin-provisioning-tools, lvm2, and all dependencies

- Create a physical volume on your block device from step 1, using the pvcreate command. Substitute your device name for /dev/xvdf. Warning: The next few steps are destructive, so be sure that you have specified the correct device!

- Create a docker volume group on the same device, using the vgcreate command.

- Create two logical volumes named thinpool and thinpoolmeta using the lvcreate command. The last parameter specifies the amount of free space to allow for automatic expanding of the data or metadata if space runs low, as a temporary stop-gap.

- Convert the volumes to a thin pool and a storage location for metadata for the thin pool, using the lvconvert command.

- Configure autoextension of thin pools via an lvm profile.

- Specify thin_pool_autoextend_threshold and thin_pool_autoextend_percent values. thin_pool_autoextend_threshold is the percentage of space used before lvm attempts to autoextend the available space (100 = disabled, not recommended). thin_pool_autoextend_percent is the amount of space to add to the device when automatically extending (0 = disabled). For example, a threshold of 80 and a percent of 20 add 20% more capacity when the disk usage reaches 80%. Save the file.

- Apply the LVM profile, using the lvchange command.

- Ensure monitoring of the logical volume is enabled. Check the Monitor column in the output of the sudo lvs -o+seg_monitor command. If it reports that the volume is not monitored, then monitoring needs to be explicitly enabled. Without this step, automatic extension of the logical volume will not occur, regardless of any settings in the applied profile. Double check that monitoring is now enabled by running the sudo lvs -o+seg_monitor command a second time. The Monitor column should now report that the logical volume is being monitored.

- If you have ever run Docker on this host before, or if /var/lib/docker/ exists, move it out of the way (this procedure uses /var/lib/docker.bk) so that Docker can use the new LVM pool to store the contents of images and containers. If any of the following steps fail and you need to restore, you can remove /var/lib/docker and replace it with /var/lib/docker.bk.

- Edit /etc/docker/daemon.json and configure the options needed for the devicemapper storage driver, including the thin pool device to use.

- Start Docker, using either systemd or service as appropriate for your host.

- Verify that Docker is using the new configuration using docker info. If Docker is configured correctly, the Data file and Metadata file are blank, and the pool name is docker-thinpool.

- After you have verified that the configuration is correct, you can remove the /var/lib/docker.bk directory which contains the previous configuration.
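To make the autoextend settings in the procedure above concrete, the following sketch simulates how a thin pool would grow under a given threshold and percent. It is a simplified model under stated assumptions: the pool extends whenever usage reaches the threshold, each extension adds the given percentage of the current size, and the volume group always has free space. The function name autoextend is hypothetical and is not an LVM API.

```python
def autoextend(size_gb: float, used_gb: float,
               threshold: int = 80, percent: int = 20) -> float:
    """Return the pool size after applying the autoextend rule repeatedly."""
    # A threshold of 100 or a percent of 0 disables autoextension.
    if threshold >= 100 or percent <= 0:
        return size_gb
    # Keep extending while usage is at or above the threshold.
    while used_gb / size_gb * 100 >= threshold:
        size_gb += size_gb * percent / 100
    return size_gb


# A 100 GB pool holding 80 GB reaches the 80% threshold and grows by 20%.
print(autoextend(100, 80))

# A pool at 50% usage is left alone.
print(autoextend(100, 50))
```

In practice the extension stops as soon as the volume group runs out of free space, which is one reason the pool still needs to be monitored.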

Manage devicemapper

Monitor the thin pool

Do not rely on LVM auto-extension alone. The volume group automatically extends, but the volume can still fill up. You can monitor free space on the volume using lvs or lvs -a. Consider using a monitoring tool at the OS level, such as Nagios.

To view the LVM logs, you can use journalctl.

If you run into repeated problems with thin pool, you can set the storage option dm.min_free_space to a value (representing a percentage) in /etc/docker/daemon.json. For instance, setting it to 10 ensures that operations fail with a warning when the free space is at or near 10%. See the storage driver options in the Engine daemon reference.

Increase capacity on a running device

You can increase the capacity of the pool on a running thin-pool device. This is useful if the data’s logical volume is full and the volume group is at full capacity. The specific procedure depends on whether you are using a loop-lvm thin pool or a direct-lvm thin pool.

Resize a loop-lvm thin pool

The easiest way to resize a loop-lvm thin pool is to use the device_tool utility, but you can use operating system utilities instead.

Use the device_tool utility

A community-contributed script called device_tool.go is available in the moby/moby GitHub repository. You can use this tool to resize a loop-lvm thin pool, avoiding the longer manual process. This tool is not guaranteed to work, but you should only be using loop-lvm on non-production systems.

If you do not want to use device_tool, you can resize the thin pool manually instead.

- To use the tool, clone the GitHub repository, change to the contrib/docker-device-tool directory, and follow the instructions in the README.md to compile the tool.

- Use the tool to resize the thin pool, for example to 200 GB.

Use operating system utilities

If you do not want to use the device_tool utility, you can resize a loop-lvm thin pool manually using the following procedure.

In loop-lvm mode, a loopback device is used to store the data, and another to store the metadata. loop-lvm mode is only supported for testing, because it has significant performance and stability drawbacks.

If you are using loop-lvm mode, the output of docker info shows file paths for Data loop file and Metadata loop file.

Follow these steps to increase the size of the thin pool. In this example, the thin pool is 100 GB, and is increased to 200 GB.

- List the sizes of the devices.

- Increase the size of the data file to 200 G using the truncate command, which is used to increase or decrease the size of a file. Note that decreasing the size is a destructive operation.

- Verify the file size changed.

- The loopback file has changed on disk but not in memory. List the size of the loopback device in memory, in GB. Reload it, then list the size again. After the reload, the size is 200 GB.

- Reload the devicemapper thin pool.

  a. Get the pool name first. The pool name is the first field, delimited by a colon (:).

  b. Dump the device mapper table for the thin pool.

  c. Calculate the total sectors of the thin pool using the second field of the output. The number is expressed in 512-byte sectors. A 100G file has 209715200 512-byte sectors. If you double this number to 200G, you get 419430400 512-byte sectors.

  d. Reload the thin pool with the new sector number, using three dmsetup commands that suspend, reload, and resume the pool.
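The sector arithmetic in the last step can be checked with a few lines of code. The sketch below assumes sizes are given in GiB (1024^3 bytes) and that the size is the second space-separated field of the table line, as described above. The helper names and the sample table line are illustrative, not output from a real host.

```python
SECTOR_BYTES = 512
GIB = 1024 ** 3


def sectors_for_gib(size_gib: int) -> int:
    """Number of 512-byte sectors in a device of the given size in GiB."""
    return size_gib * GIB // SECTOR_BYTES


def resize_table_line(table_line: str, new_sectors: int) -> str:
    """Replace the second field (the length in sectors) of a table line."""
    fields = table_line.split()
    fields[1] = str(new_sectors)
    return " ".join(fields)


print(sectors_for_gib(100))  # 209715200
print(sectors_for_gib(200))  # 419430400

# Hypothetical table line for a 100 GiB thin pool.
old_line = "0 209715200 thin-pool 7:1 7:0 128 32768 1 skip_block_zeroing"
print(resize_table_line(old_line, sectors_for_gib(200)))
```

The resulting line is the kind of table that would be passed when reloading the pool. Double-check the other fields against your own table dump before using it.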

Resize a direct-lvm thin pool

To extend a direct-lvm thin pool, you need to first attach a new block device to the Docker host, and make note of the name assigned to it by the kernel. In this example, the new block device is /dev/xvdg.

Follow this procedure to extend a direct-lvm thin pool, substituting your block device and other parameters to suit your situation.

- Gather information about your volume group. Use the pvdisplay command to find the physical block devices currently in use by your thin pool, and the volume group’s name. In the following steps, substitute your block device or volume group name as appropriate.

- Extend the volume group, using the vgextend command with the VG Name from the previous step, and the name of your new block device.

- Extend the docker/thinpool logical volume. Extending it to use 100% of the volume takes effect right away, without auto-extend. To extend the metadata thinpool instead, use docker/thinpool_tmeta.

- Verify the new thin pool size using the Data Space Available field in the output of docker info. If you extended the docker/thinpool_tmeta logical volume instead, look for Metadata Space Available.

Activate the devicemapper after reboot

If you reboot the host and find that the docker service failed to start, look for the error, “Non existing device”. You need to re-activate the logical volumes, for example with lvchange -ay docker/thinpool.

How the devicemapper storage driver works

Warning: Do not directly manipulate any files or directories within /var/lib/docker/. These files and directories are managed by Docker.

Use the lsblk command to see the devices and their pools, from the operating system’s point of view.

Use the mount command to see the mount-point Docker is using.

When you use devicemapper, Docker stores image and layer contents in the thinpool, and exposes them to containers by mounting them under subdirectories of /var/lib/docker/devicemapper/.

Image and container layers on-disk

The /var/lib/docker/devicemapper/metadata/ directory contains metadata about the Devicemapper configuration itself and about each image and container layer that exists. The devicemapper storage driver uses snapshots, and this metadata includes information about those snapshots. These files are in JSON format.

The /var/lib/docker/devicemapper/mnt/ directory contains a mount point for each image and container layer that exists. Image layer mount points are empty, but a container’s mount point shows the container’s filesystem as it appears from within the container.

Image layering and sharing

The devicemapper storage driver uses dedicated block devices rather than formatted filesystems, and operates on files at the block level for maximum performance during copy-on-write (CoW) operations.

Snapshots

Another feature of devicemapper is its use of snapshots (also sometimes called thin devices or virtual devices), which store the differences introduced in each layer as very small, lightweight thin pools. Snapshots provide many benefits:

- Layers which are shared in common between containers are only stored on disk once, unless they are writable. For instance, if you have 10 different images which are all based on alpine, the alpine image and all its parent images are only stored once each on disk.

- Snapshots are an implementation of a copy-on-write (CoW) strategy. This means that a given file or directory is only copied to the container’s writable layer when it is modified or deleted by that container.

- Because devicemapper operates at the block level, multiple blocks in a writable layer can be modified simultaneously.

- Snapshots can be backed up using standard OS-level backup utilities. Just make a copy of /var/lib/docker/devicemapper/.

Devicemapper workflow

When you start Docker with the devicemapper storage driver, all objects related to image and container layers are stored in /var/lib/docker/devicemapper/, which is backed by one or more block-level devices, either loopback devices (testing only) or physical disks.

- The base device is the lowest-level object. This is the thin pool itself. You can examine it using docker info. It contains a filesystem. This base device is the starting point for every image and container layer. The base device is a Device Mapper implementation detail, rather than a Docker layer.

- Metadata about the base device and each image or container layer is stored in /var/lib/docker/devicemapper/metadata/ in JSON format. These layers are copy-on-write snapshots, which means that they are empty until they diverge from their parent layers.

- Each container’s writable layer is mounted on a mountpoint in /var/lib/docker/devicemapper/mnt/. An empty directory exists for each read-only image layer and each stopped container.

Each image layer is a snapshot of the layer below it. The lowest layer of each image is a snapshot of the base device that exists in the pool. When you run a container, it is a snapshot of the image the container is based on. The following example shows a Docker host with two running containers. The first is an ubuntu container and the second is a busybox container.

How container reads and writes work with devicemapper

Reading files

With devicemapper, reads happen at the block level. The diagram below shows the high level process for reading a single block (0x44f) in an example container.

An application makes a read request for block 0x44f in the container. Because the container is a thin snapshot of an image, it doesn’t have the block, but it has a pointer to the block on the nearest parent image where it does exist, and it reads the block from there. The block now exists in the container’s memory.

Writing files

Writing a new file: With the devicemapper driver, writing new data to a container is accomplished by an allocate-on-demand operation. Each block of the new file is allocated in the container’s writable layer and the block is written there.

Updating an existing file: The relevant block of the file is read from the nearest layer where it exists. When the container writes the file, only the modified blocks are written to the container’s writable layer.

Deleting a file or directory: When you delete a file or directory in a container’s writable layer, or when an image layer deletes a file that exists in its parent layer, the devicemapper storage driver intercepts further read attempts on that file or directory and responds that the file or directory does not exist.

Writing and then deleting a file: If a container writes to a file and later deletes the file, all of those operations happen in the container’s writable layer. In that case, if you are using direct-lvm, the blocks are freed. If you use loop-lvm, the blocks may not be freed. This is another reason not to use loop-lvm in production.

Device Mapper and Docker performance

allocate-on demandperformance impact:Thedevicemapperstorage driver uses anallocate-on-demandoperation toallocate new blocks from the thin pool into a container’s writable layer.Each block is 64KB, so this is the minimum amount of space that is usedfor a write.- Copy-on-write performance impact: The first time a container modifies aspecific block, that block is written to the container’s writable layer.Because these writes happen at the level of the block rather than the file,performance impact is minimized. However, writing a large number of blocks canstill negatively impact performance, and the

devicemapperstorage driver mayactually perform worse than other storage drivers in this scenario. Forwrite-heavy workloads, you should use data volumes, which bypass the storagedriver completely.
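As a rough illustration of the 64KB granularity described above, the sketch below rounds a write size up to whole blocks. The function name thin_alloc_bytes is hypothetical, and the calculation assumes the write starts on a block boundary in space that has not been allocated yet; it is a back-of-the-envelope model, not how the driver itself is implemented.

```shell
# Block size of the thin pool as stated above (64KB = 65536 bytes).
BLOCK_SIZE=65536

# thin_alloc_bytes BYTES
# Prints the space consumed in the writable layer for a new write of BYTES
# bytes, assuming allocation happens in whole 64KB blocks.
thin_alloc_bytes() {
  bytes=$1
  # Integer ceiling division: number of blocks needed to hold the write.
  blocks=$(( (bytes + BLOCK_SIZE - 1) / BLOCK_SIZE ))
  echo $(( blocks * BLOCK_SIZE ))
}

# Usage:
#   thin_alloc_bytes 1        # a 1-byte write still takes one full block
#   thin_alloc_bytes 100000   # spans two blocks
```

Under this model, many small writes to distinct blocks consume far more pool space than their combined size suggests.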

Performance best practices

Keep these things in mind to maximize performance when using the devicemapper storage driver.

- Use direct-lvm: The loop-lvm mode is not performant and should never be used in production.

- Use fast storage: Solid-state drives (SSDs) provide faster reads and writes than spinning disks.

- Memory usage: The devicemapper storage driver uses more memory than some other storage drivers. Each launched container loads one or more copies of its files into memory, depending on how many blocks of the same file are being modified at the same time. Due to the memory pressure, the devicemapper storage driver may not be the right choice for certain workloads in high-density use cases.

- Use volumes for write-heavy workloads: Volumes provide the best and most predictable performance for write-heavy workloads. This is because they bypass the storage driver and do not incur any of the potential overheads introduced by thin provisioning and copy-on-write. Volumes have other benefits, such as allowing you to share data among containers and persisting even when no running container is using them.

- Note: When using devicemapper and the json-file log driver, the log files generated by a container are still stored in Docker’s dataroot directory, by default /var/lib/docker. If your containers generate lots of log messages, this may lead to increased disk usage or the inability to manage your system due to a full disk. You can configure a log driver to store your container logs externally.

Create and work on an ext4 filesystem image inside of macOS using Docker for Mac. Use this method if you aren't using external USB drives and/or don't want to install a fat VM like VirtualBox.

1. Install Docker

Follow the steps outlined on Docker's website.

Download Docker for Mac directly.

2. Create a working folder

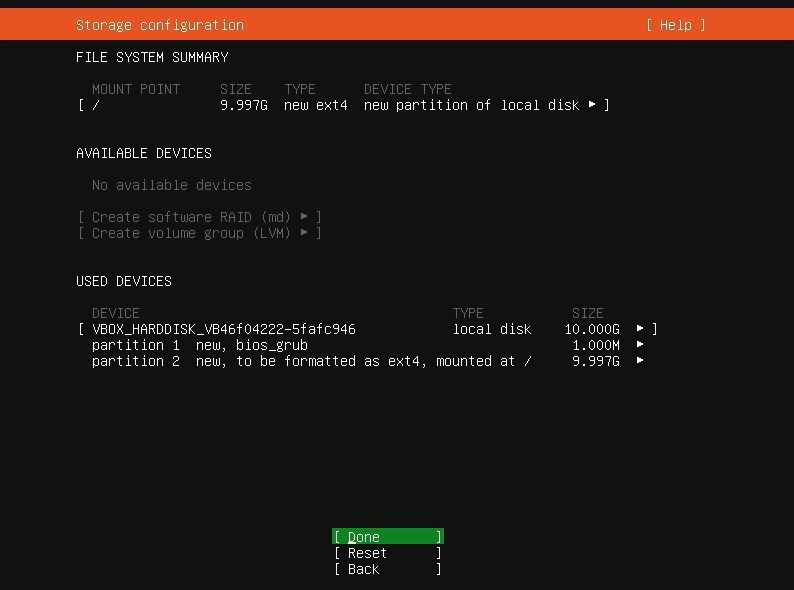

Pick a directory to start in, e.g.:

mkdir -p $HOME/ext4fs

3. Run an Ubuntu container

Run the container in privileged mode and mount the directory you just created:
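A sketch of one way to do this. The ubuntu image name and the /work mount point inside the container are assumptions for illustration, and start_ext4_container is a hypothetical helper name; none of them come from the original text.

```shell
# Sketch only: the "ubuntu" image and the /work container path are assumptions.
# start_ext4_container HOST_DIR
# Starts an interactive, privileged Ubuntu container with HOST_DIR mounted at
# /work. --privileged lets the container set up loop devices and call mount.
start_ext4_container() {
  host_dir=$1
  docker run -it --rm --privileged -v "$host_dir:/work" ubuntu bash
}

# Usage (on the macOS host):
#   start_ext4_container "$HOME/ext4fs"
```

Because the working folder is a bind mount, anything written to /work inside the container stays on the host after the container exits.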

4. Create a raw disk image

Create a blank file and format it as ext4:
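A possible version of this step, run inside the container. The image file name, its size, and the /work path are assumptions; the /ext4fs mount point matches the one used in the unmount step later on. The helper names are hypothetical.

```shell
# Sketch only: file name, size, and the /work path are assumptions.

# create_blank_image PATH SIZE_MB
# Writes SIZE_MB MiB of zeros to PATH to serve as a raw disk image.
create_blank_image() {
  img=$1
  size_mb=$2
  dd if=/dev/zero of="$img" bs=1048576 count="$size_mb" 2>/dev/null
}

# format_and_mount PATH
# Formats the raw image as ext4 and loop-mounts it at /ext4fs.
# -F tells mkfs.ext4 to proceed even though PATH is a regular file
# rather than a block device.
format_and_mount() {
  img=$1
  mkfs.ext4 -F "$img"
  mkdir -p /ext4fs
  mount -o loop "$img" /ext4fs
}

# Usage (inside the container):
#   create_blank_image /work/ext4.img 512
#   format_and_mount /work/ext4.img
```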

5. Verify the image is mounted

Enter the directory where the image is mounted to see if it formatted correctly:
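One simple check, as a sketch: mkfs.ext4 creates a lost+found directory at the root of a new filesystem, so its presence at the mount point is a reasonable sign that the image was formatted and mounted. The helper name looks_like_fresh_ext4 is hypothetical.

```shell
# Sketch only: the function name is hypothetical; /ext4fs is the mount point
# referred to in the text.

# looks_like_fresh_ext4 DIR
# Succeeds if DIR contains the lost+found directory that mkfs.ext4 creates.
looks_like_fresh_ext4() {
  [ -d "$1/lost+found" ]
}

# Usage (inside the container):
#   cd /ext4fs && ls -la
#   looks_like_fresh_ext4 /ext4fs && echo "ext4 image is mounted"
```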

6. Profit

When finished, you can unmount the directory (umount /ext4fs) and exit the container. On your host filesystem you'll then have a shiny new raw ext4 image, which you can convert to other image types (e.g. VirtualBox, VMware) using tools like Packer.

Note: this method does not mount external drives, as USB devices currently aren't supported in Docker for Mac. You may be able to mount partitions or other disk images by copying them into a second directory and mounting that directory into the container alongside your ext4 working folder.

You can then use any tools available to Ubuntu to work on other disk images or partitions while inside the container, having full read-write access to your ext4 partition.

Note: your mounted filesystem will not be visible to the host OS, as it's mounted in a loopback inside the container's OS.